Metric Convergence: Is the Tournament Becoming a Monoculture?

Rolling cross-metric correlations show MMC-CORJ60 climbing from 0.35 to 0.55 over 800 rounds while cross-sectional dispersion dropped roughly 35-40%, suggesting the Numerai tournament field is converging on a narrowing set of strategies.

Numerai's scoring system rewards diversity. MMC pays for originality, CORJ60 for accuracy, BMC for benchmark contribution, FNCv3 for feature-neutral signal. If these metrics move independently across the field, the tournament has genuine strategic breadth. If they become more correlated, the field may be narrowing toward a smaller set of successful approaches.

This post measures convergence from three angles: cross-metric correlation, cross-sectional dispersion, and residual originality.

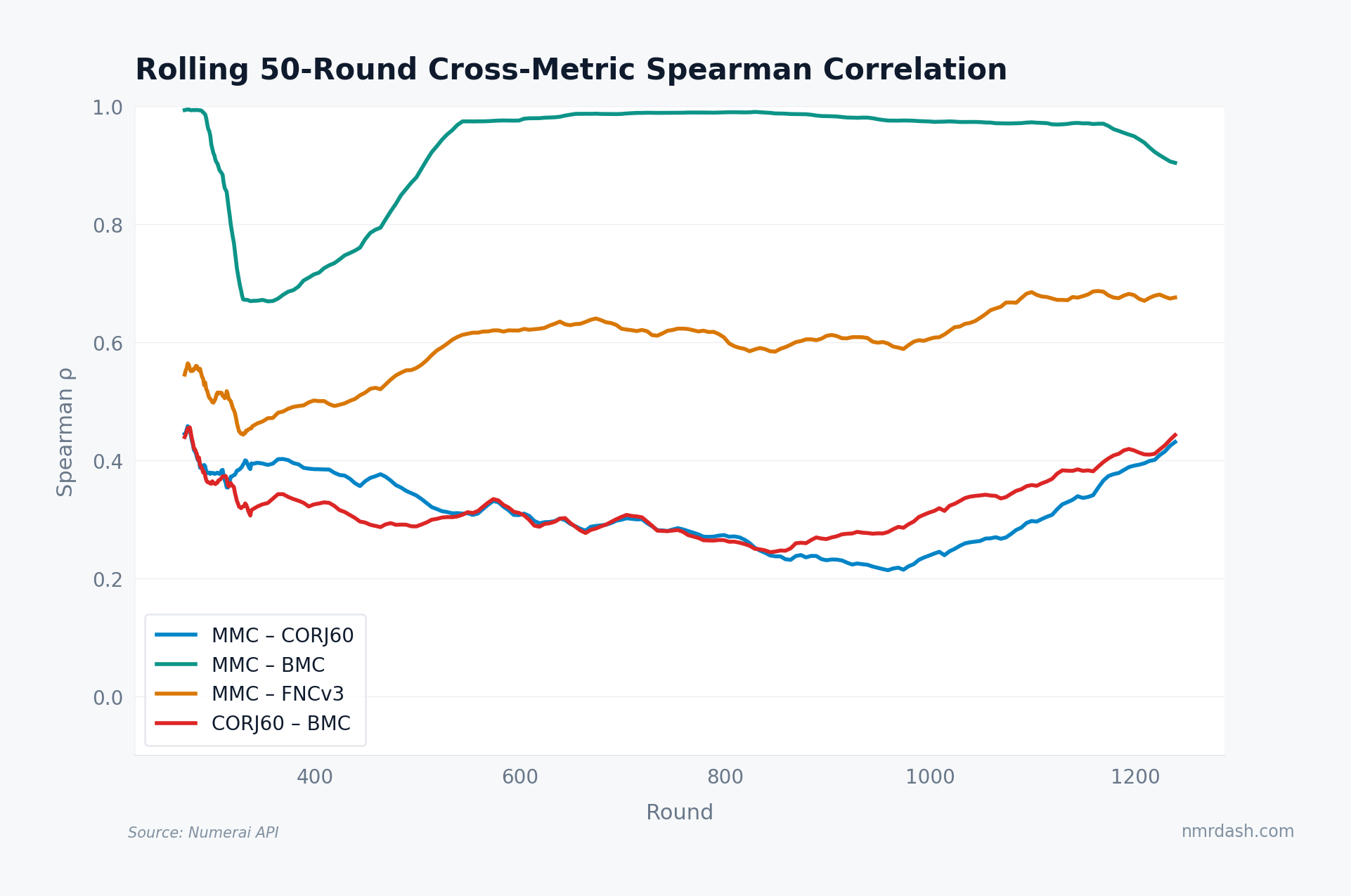

Cross-Metric Correlation: Are the Metrics Collapsing?

For each round, compute the Spearman rank correlation between every pair of primary metrics across all staked models. A rolling 50-round average smooths the noise and reveals the trend.
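As a rough sketch of that procedure, the snippet below computes a Spearman correlation for one round and a trailing 50-round mean, using only the standard library. The toy scores, the function names, and the choice of a trailing (rather than centered) window are assumptions for illustration, not details taken from the underlying analysis.

```python
from statistics import mean

def average_ranks(values):
    """Rank values 1..n, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has zero variance."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / (vx * vy) ** 0.5

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

def rolling_mean(series, window=50):
    """Trailing mean; positions with fewer than `window` values are None."""
    out = []
    for i in range(len(series)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(mean(series[i + 1 - window : i + 1]))
    return out

# Hypothetical toy data: one round, five staked models.
mmc = [0.010, -0.004, 0.021, 0.003, -0.012]
corj60 = [0.015, 0.002, 0.018, -0.001, -0.020]
rho = spearman(mmc, corj60)  # approximately 0.9 for this toy round
print(f"MMC-CORJ60 Spearman for this round: {rho:.2f}")
```

Running `spearman` once per round for each metric pair yields one series per pair, and `rolling_mean` over that series gives the smoothed trend.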

The MMC-CORJ60 correlation climbed from roughly 0.35 around round 300 to 0.55 by round 1100, a steady upward drift indicating that round-level rankings on CORJ60 and MMC increasingly agree. MMC-BMC tracks a similar path from a higher baseline; in recent rounds it sits near 0.80 across the whole field. At that level, a model's "originality" score and its "benchmark contribution" score rank the field in nearly the same order, even though they were designed to capture different things.

When metrics designed to measure different qualities start agreeing, the scoring system is rewarding a narrower band of approaches than it once did.

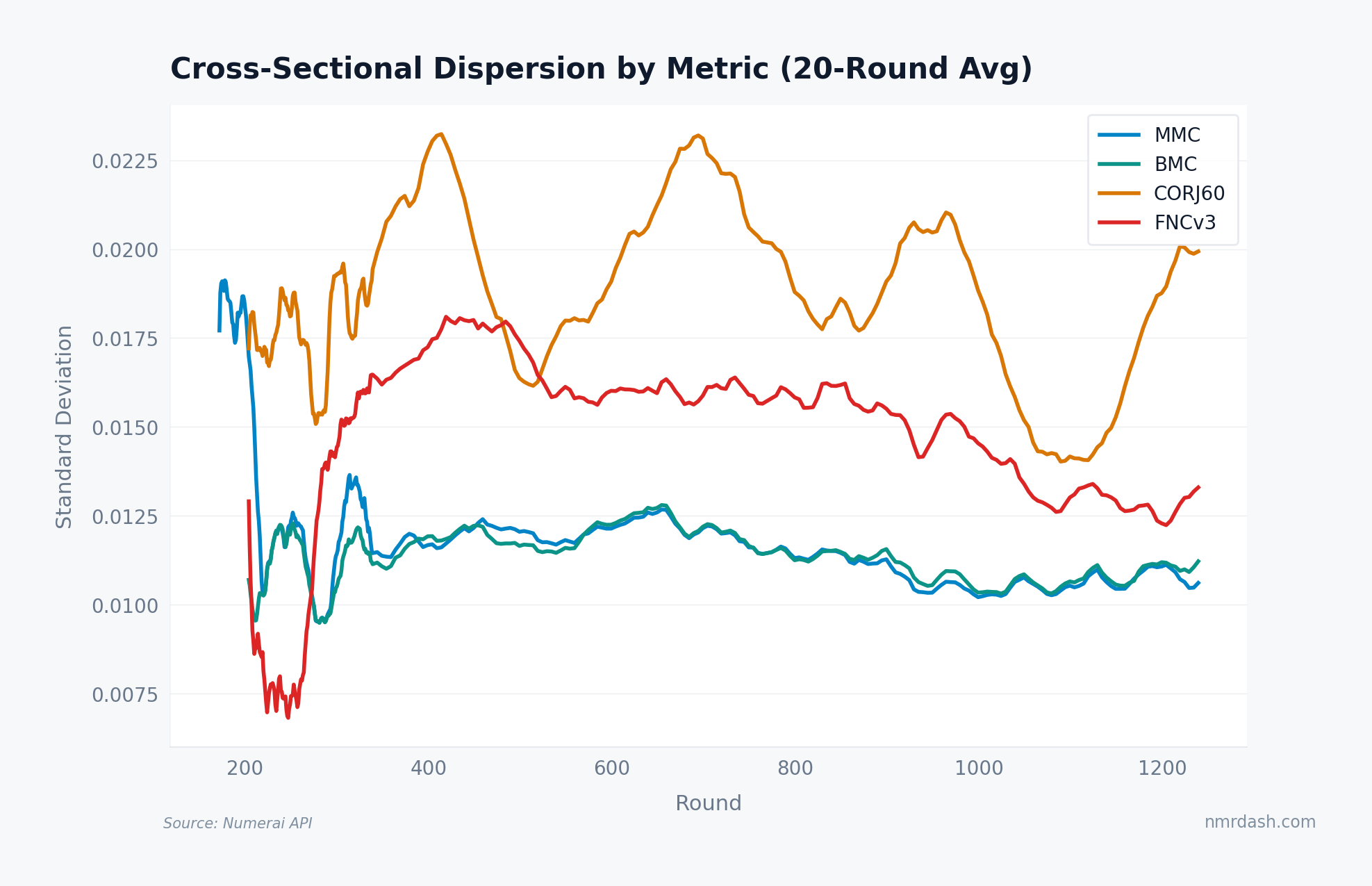

Dispersion: Is the Field Narrowing?

Cross-sectional standard deviation measures how spread out model scores are within each round. High dispersion means varied strategies; low dispersion means bunching.

MMC dispersion dropped from roughly 0.035 in round 300 to 0.022 in recent rounds, a 37% compression. CORJ60 dispersion fell from 0.028 to 0.018 over the same stretch, about 36%. BMC and FNCv3 followed similar paths, with BMC showing the steepest decline after round 600. The field is measurably tighter across every primary metric.
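The compression figures follow directly from the start and end values quoted above. Here is a minimal sketch of both steps: the per-round dispersion and the percentage change. It assumes a population standard deviation (the post does not say whether population or sample was used), and `dispersion_by_round` and `pct_change` are illustrative names.

```python
from statistics import pstdev

def dispersion_by_round(scores_by_round):
    """Map round number -> cross-sectional standard deviation of model scores."""
    return {rnd: pstdev(scores) for rnd, scores in scores_by_round.items()}

def pct_change(start, end):
    """Percentage change from start to end (negative means compression)."""
    return (end - start) / start * 100

# Hypothetical toy scores for two rounds, four models each.
toy = {
    300: [0.04, -0.03, 0.02, -0.01],
    1100: [0.02, -0.01, 0.01, 0.00],
}
print(dispersion_by_round(toy))

# Dispersion levels quoted in the text.
mmc_change = pct_change(0.035, 0.022)
corj60_change = pct_change(0.028, 0.018)
print(f"MMC: {mmc_change:.1f}%, CORJ60: {corj60_change:.1f}%")
```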

Narrowing dispersion is consistent with a diversification paradox: as more participants enter and learn from the same public resources, feature sets, and target definitions, their predictions regress toward a common signal. New models join the tournament but many arrive with approaches that look like what already exists, pushing dispersion down even as nominal participation grows.

Residual IC: Is Non-Meta-Model Signal Shrinking?

Residual IC (RIC) strips out the meta-model component and measures what unique signal each model contributes. If RIC is declining, the original thinking that MMC is supposed to reward is getting scarcer.
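The post does not spell out how the meta-model component is removed, so the sketch below is one plausible reading, not the actual method: regress a model's predictions on the meta-model's predictions, keep the residual, and correlate that residual with the target. The function names and toy numbers are assumptions for illustration.

```python
from statistics import mean

def center(xs):
    """Subtract the mean from every value."""
    m = mean(xs)
    return [x - m for x in xs]

def residualize(preds, meta):
    """Remove the part of preds linearly explained by meta (one-variable OLS)."""
    p, m = center(preds), center(meta)
    beta = sum(a * b for a, b in zip(p, m)) / sum(b * b for b in m)
    return [a - beta * b for a, b in zip(p, m)]

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has zero variance."""
    cx, cy = center(x), center(y)
    vx = sum(a * a for a in cx)
    vy = sum(b * b for b in cy)
    if vx == 0 or vy == 0:
        return 0.0
    return sum(a * b for a, b in zip(cx, cy)) / (vx * vy) ** 0.5

def residual_ic(preds, meta, target):
    """Correlation between the target and the non-meta-model part of preds."""
    return pearson(residualize(preds, meta), target)

# Hypothetical toy data: the model equals the meta-model plus an extra
# component that happens to line up perfectly with the target.
meta = [1.0, 2.0, 3.0, 4.0]
preds = [2.0, 1.0, 2.0, 5.0]
target = [0.75, 0.25, 0.25, 0.75]
ric = residual_ic(preds, meta, target)

# A model that is just a rescaled meta-model has no residual signal.
copycat_ric = residual_ic([2.0, 4.0, 6.0, 8.0], meta, target)
print(ric, copycat_ric)
```

Under this reading, a model that merely rescales the meta-model scores zero, while a model whose extra component tracks the target scores high, which is the behavior the RIC description implies.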

Median RIC drifted from roughly 0.012 around round 350 to 0.007 in recent rounds, a 42% decline. The stake-weighted mean fell from 0.015 to 0.009, a 40% decline: about the same in relative terms, but a larger absolute drop (0.006 versus 0.005) from a higher starting point. The largest stakers have therefore given up the most residual signal in absolute terms, which compounds the concentration problem at the top of the stake distribution.
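To make the two aggregates concrete, here is a sketch of a median and a stake-weighted mean over per-model RIC values, followed by the absolute and relative declines implied by the figures quoted above. The toy RIC values and stakes are made up for illustration.

```python
from statistics import median

def stake_weighted_mean(values, stakes):
    """Mean of values with each model weighted by its stake."""
    total = sum(stakes)
    return sum(v * s for v, s in zip(values, stakes)) / total

def decline(start, end):
    """Return (absolute drop, relative drop in percent) from start to end."""
    return start - end, (start - end) / start * 100

# Hypothetical toy round: three models, the largest staker has the highest RIC.
ric_values = [0.02, 0.01, 0.00]
stakes = [100, 50, 50]
toy_median = median(ric_values)
toy_weighted = stake_weighted_mean(ric_values, stakes)
print(toy_median, toy_weighted)

# Figures quoted in the text.
median_abs, median_rel = decline(0.012, 0.007)
weighted_abs, weighted_rel = decline(0.015, 0.009)
print(f"median: -{median_abs:.3f} ({median_rel:.0f}%)")
print(f"stake-weighted: -{weighted_abs:.3f} ({weighted_rel:.0f}%)")
```

The stake-weighted mean sits above the median whenever larger stakers carry more residual signal, so the gap between the two series is worth watching alongside their individual trends.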

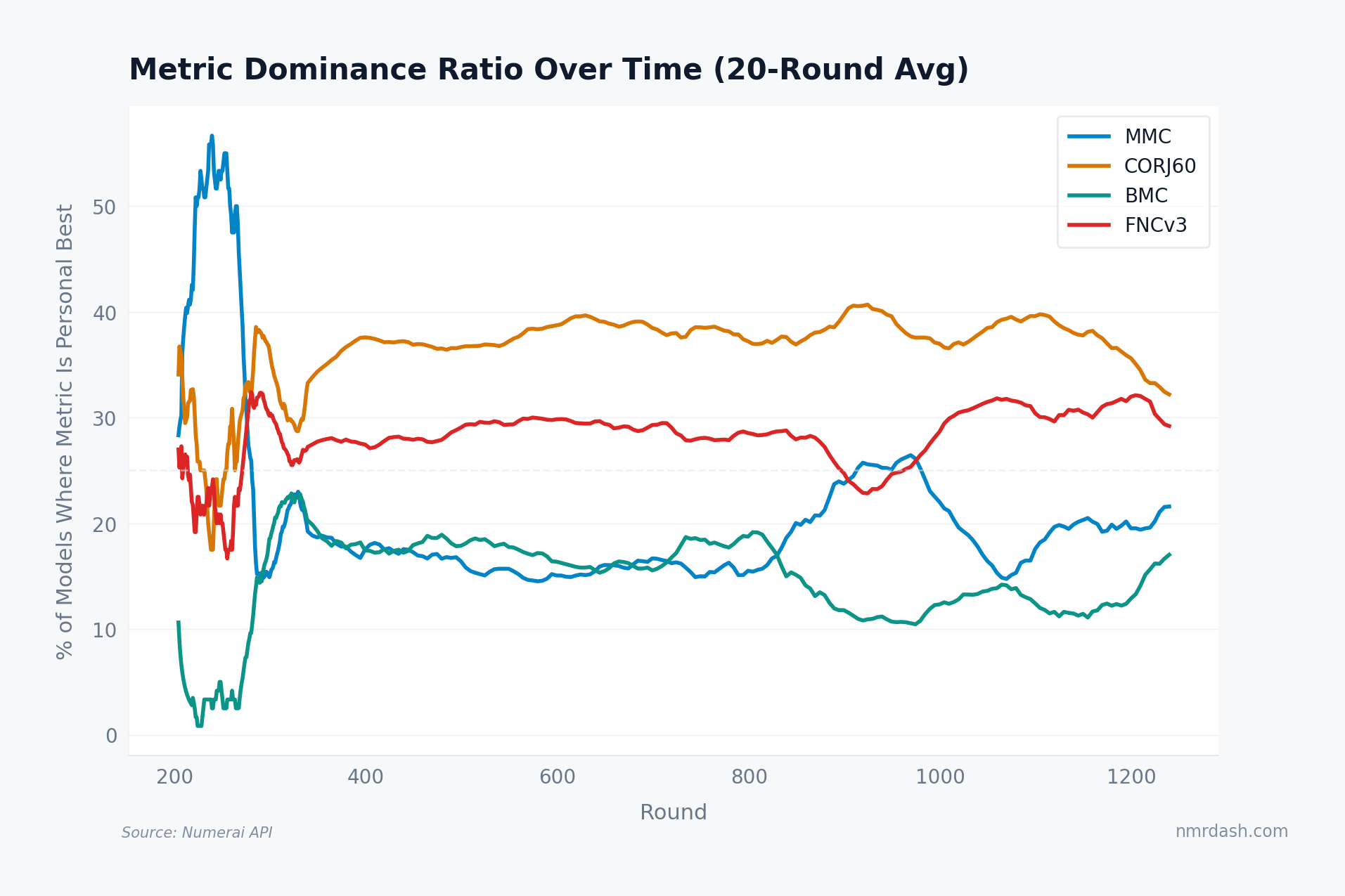

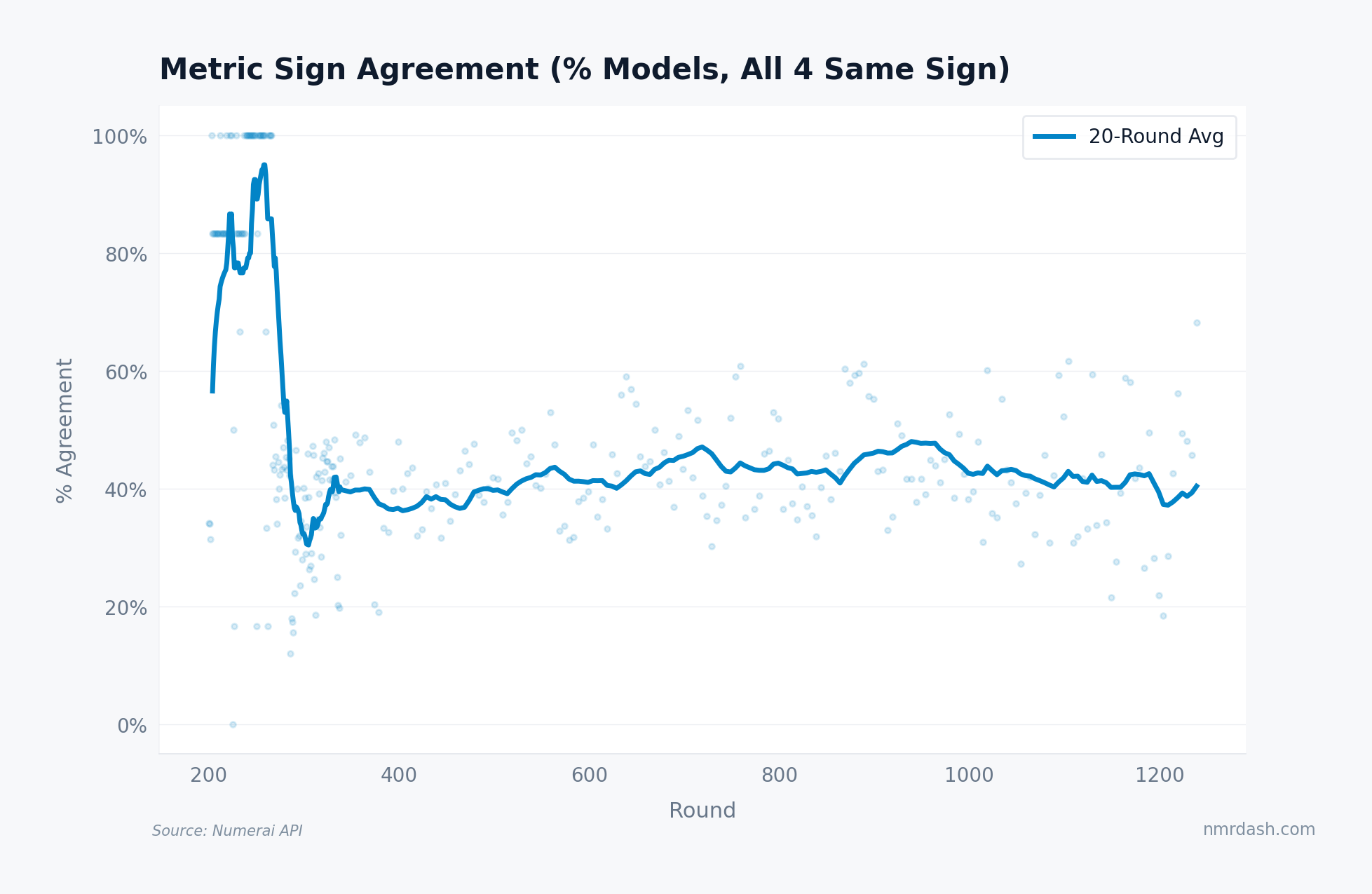

Metric Agreement: The Monoculture Test

The sharpest monoculture signal is sign agreement: what percentage of models have all four primary metrics pointing the same direction (all positive or all negative) in a given round? In a diverse tournament, metrics should frequently disagree for individual models.
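The sign-agreement share is straightforward to compute. In this minimal sketch each model is a tuple of its four primary metric scores for one round; treating an exact zero as disagreement is an assumption, and the toy scores are invented.

```python
def all_same_sign(metrics):
    """True if every metric is strictly positive or every metric is strictly negative."""
    return all(m > 0 for m in metrics) or all(m < 0 for m in metrics)

def sign_agreement_pct(models):
    """Percentage of models whose metrics all share one sign in a round."""
    agree = sum(all_same_sign(row) for row in models)
    return 100 * agree / len(models)

# Hypothetical toy round: (MMC, CORJ60, BMC, FNCv3) per model.
round_scores = [
    (0.010, 0.020, 0.008, 0.004),     # all positive: agrees
    (-0.006, -0.011, -0.003, -0.002), # all negative: agrees
    (0.012, -0.004, 0.009, 0.001),    # mixed signs: disagrees
    (0.005, 0.007, 0.000, 0.002),     # contains a zero: counted as disagreeing
]
pct = sign_agreement_pct(round_scores)
print(f"Sign agreement: {pct:.0f}%")  # 50% for this toy round
```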

Sign agreement climbed from roughly 45% in round 300 to 62% in recent rounds. More than three in five models now have all four metrics pointing the same way, up from fewer than half.

The climb is gradual but persistent. No single round marks a regime change; the trend reflects the slow accumulation of shared knowledge, converging feature pipelines, and the gravitational pull of public notebooks and benchmark approaches.

Takeaways

The metrics are converging. MMC-CORJ60 correlation rose from 0.35 to 0.55, and all primary metric pairs are trending upward. The scoring system now shows a more correlated performance profile than it once did.

Dispersion is compressing across the board. Cross-sectional spread dropped 35-40% on every primary metric. Models are producing more similar outputs, round after round.

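Residual originality is declining, and top stakers have lost the most in absolute terms. Since roughly round 350, stake-weighted RIC fell 40% (0.015 to 0.009) and median RIC fell 42% (0.012 to 0.007). The models that carry the most weight in the meta-model are converging at least as much as the rest of the field.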

Roughly 62% of models now agree on sign across all four metrics. That leaves about 38% of the field where the metrics meaningfully disagree, the segment most likely contributing unique signal. If you stake, the bet worth making is on a model whose metrics disagree on direction in a given round, not on one that lights up everything green at once. Track these trends on the Trends page and the per-round detail in Rounds.