Meta-Model Crowding: The Tax on Success

Models in the top stake decile show a median meta-model correlation of roughly 0.45 versus 0.28 for the bottom decile, and models with 5x stake increases show a roughly 35–40% drop in mean MMC over the next 20 rounds.

Numerai's stake-weighted meta-model has a built-in paradox: the better a model performs, the more stake it accumulates; the more stake it holds, the heavier its weight in the meta-model; and the heavier its weight, the more its predictions become the meta-model. At that point, MMC (the metric that rewards originality) trends toward zero, because part of the model's signal is already embedded in the meta-model and less residual originality is left to reward.

How large is this "crowding tax," and is it getting worse? The data from over 30,000 recent model-round observations paints a clear picture.

Stake and Meta-Model Correlation

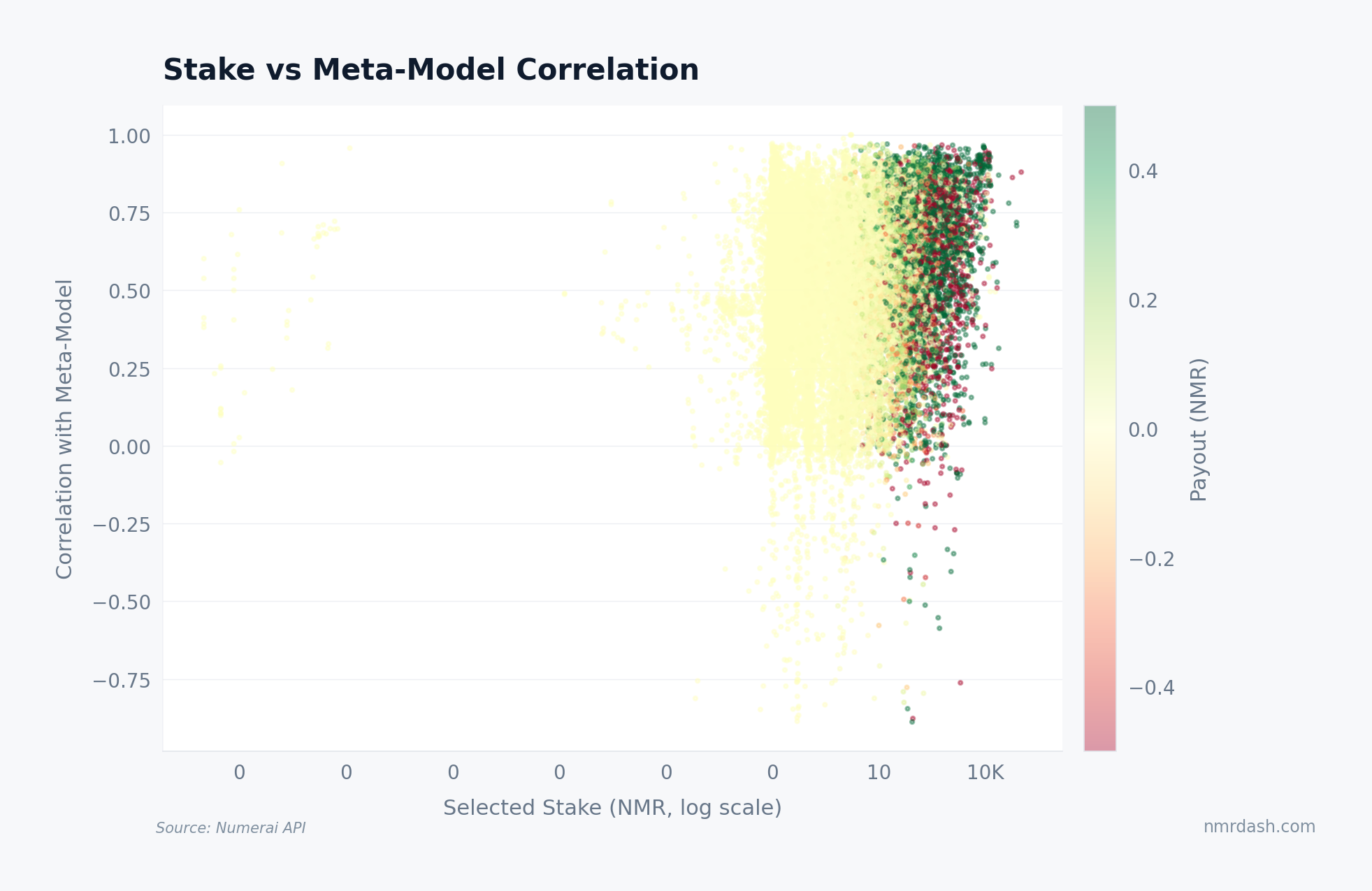

The first question: do larger stakers actually correlate more with the meta-model?

The relationship is visible in the scatter. Models staking above 1,000 NMR cluster at corr_w_meta_model values of 0.40–0.55, while models below 10 NMR spread across the full range from near-zero to 0.60. The median meta-model correlation for the top stake decile sits at roughly 0.45, versus 0.28 for the bottom decile.

This is partly mechanical — a model weighted at 5% of the meta-model has 5% of itself baked in — and partly selection: large stakers tend to use similar high-quality features and architectures, producing correlated predictions even before stake weighting enters the picture. The meta-model structure article explores the concentration side; here the focus is on what that correlation costs.
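The mechanical part can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not Numerai's actual aggregation pipeline: the function name `corr_with_meta_model`, the model count, and the `shared` parameter (how much signal all models have in common before any weighting) are hypothetical choices made for illustration.

```python
import numpy as np

def corr_with_meta_model(weight, n_models=50, n_obs=5000, shared=0.3, seed=0):
    """Correlation of model 0 with a weighted-average "meta-model" in which
    model 0 holds `weight` of total stake and the rest is split evenly."""
    rng = np.random.default_rng(seed)
    common = rng.standard_normal(n_obs)            # signal every model shares
    own = rng.standard_normal((n_models, n_obs))   # model-specific signal
    preds = shared * common + np.sqrt(1 - shared**2) * own
    weights = np.full(n_models, (1 - weight) / (n_models - 1))
    weights[0] = weight
    meta = weights @ preds                         # stake-weighted average
    return np.corrcoef(preds[0], meta)[0, 1]

# Same predictions every time (fixed seed); only model 0's weight changes.
for w in (0.01, 0.05, 0.25):
    print(f"weight {w:.0%}: corr with meta-model = {corr_with_meta_model(w):.2f}")
```

In this toy setup the model's predictions never change, yet its correlation with the aggregate rises as its weight grows. The `shared` term stands in for the selection effect: it sets a floor on correlation that exists before stake weighting enters.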

The MMC Penalty by Meta-Model Decile

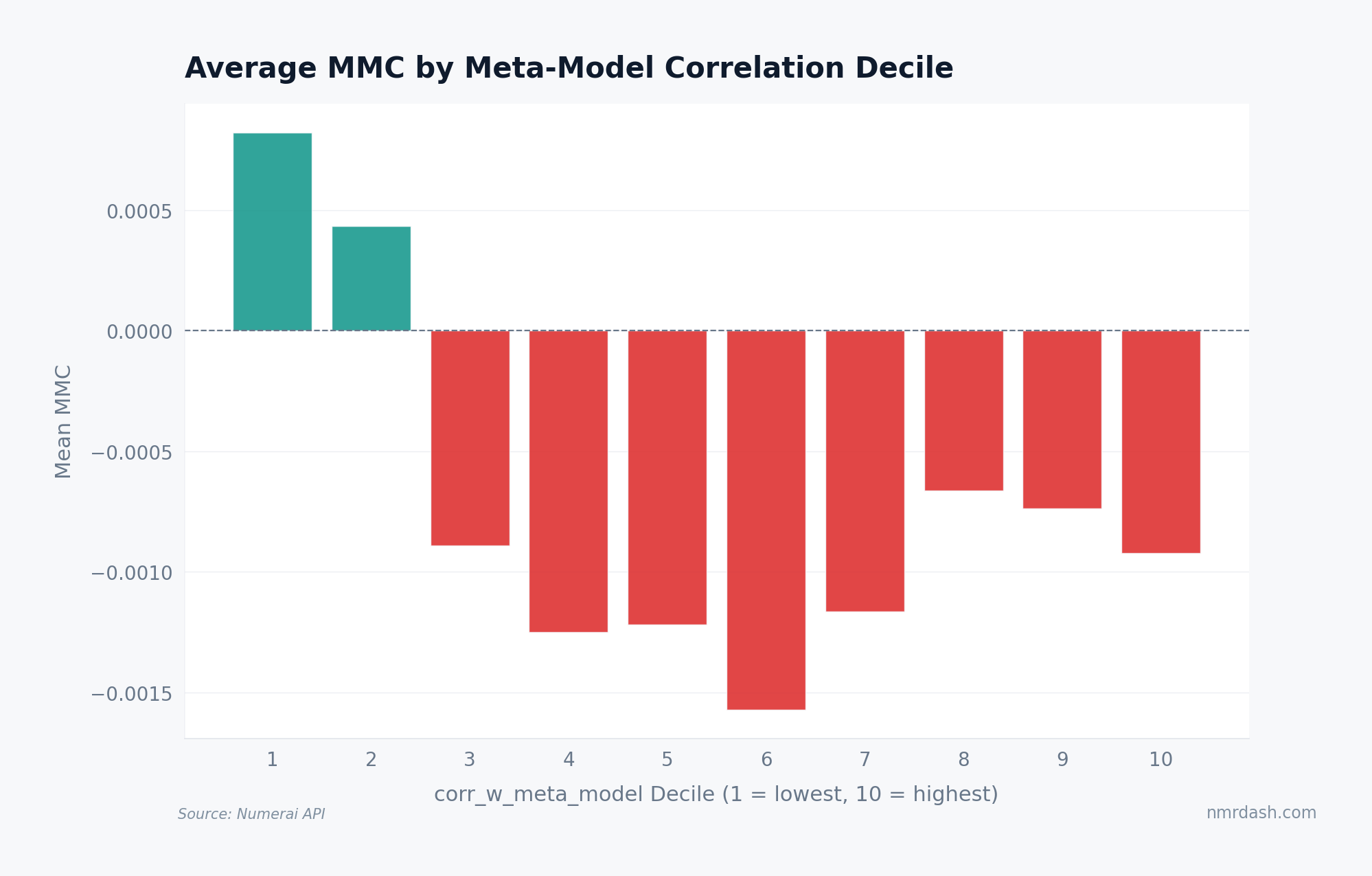

If high meta-model correlation is the disease, reduced MMC is the symptom. Grouping all observations by their corr_w_meta_model decile reveals a steep gradient.

Models in the lowest meta-model-correlation decile average about 0.012 MMC: comfortably positive and earning the 2x multiplier in the payout formula. The top decile averages roughly -0.003 MMC, meaning zero contribution or a slightly negative one. The decline is not linear: deciles 1–5 cluster in the 0.008–0.012 range, and the drop accelerates sharply in deciles 7–10.

Being 50th percentile in meta-model correlation is fine. Being 90th percentile is expensive. The penalty is concentrated at the top end, exactly where large stakers live.
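The decile grouping described above can be sketched in a few lines of pandas. This assumes a DataFrame with one row per model-round observation; `corr_w_meta_model` is the field named in this article, while the `mmc` column name and the function name are assumptions.

```python
import pandas as pd

def mmc_by_mm_corr_decile(df: pd.DataFrame) -> pd.Series:
    """Mean MMC within each decile of meta-model correlation (1 = lowest)."""
    decile = pd.qcut(
        df["corr_w_meta_model"], q=10, labels=list(range(1, 11))
    ).rename("decile")
    # observed=True keeps only deciles that actually contain rows
    return df.groupby(decile, observed=True)["mmc"].mean()
```

Note that `pd.qcut` raises an error when many observations share the same value and bin edges collide; passing `duplicates="drop"` (without fixed labels) is one way to handle that case.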

Is Crowding Getting Worse?

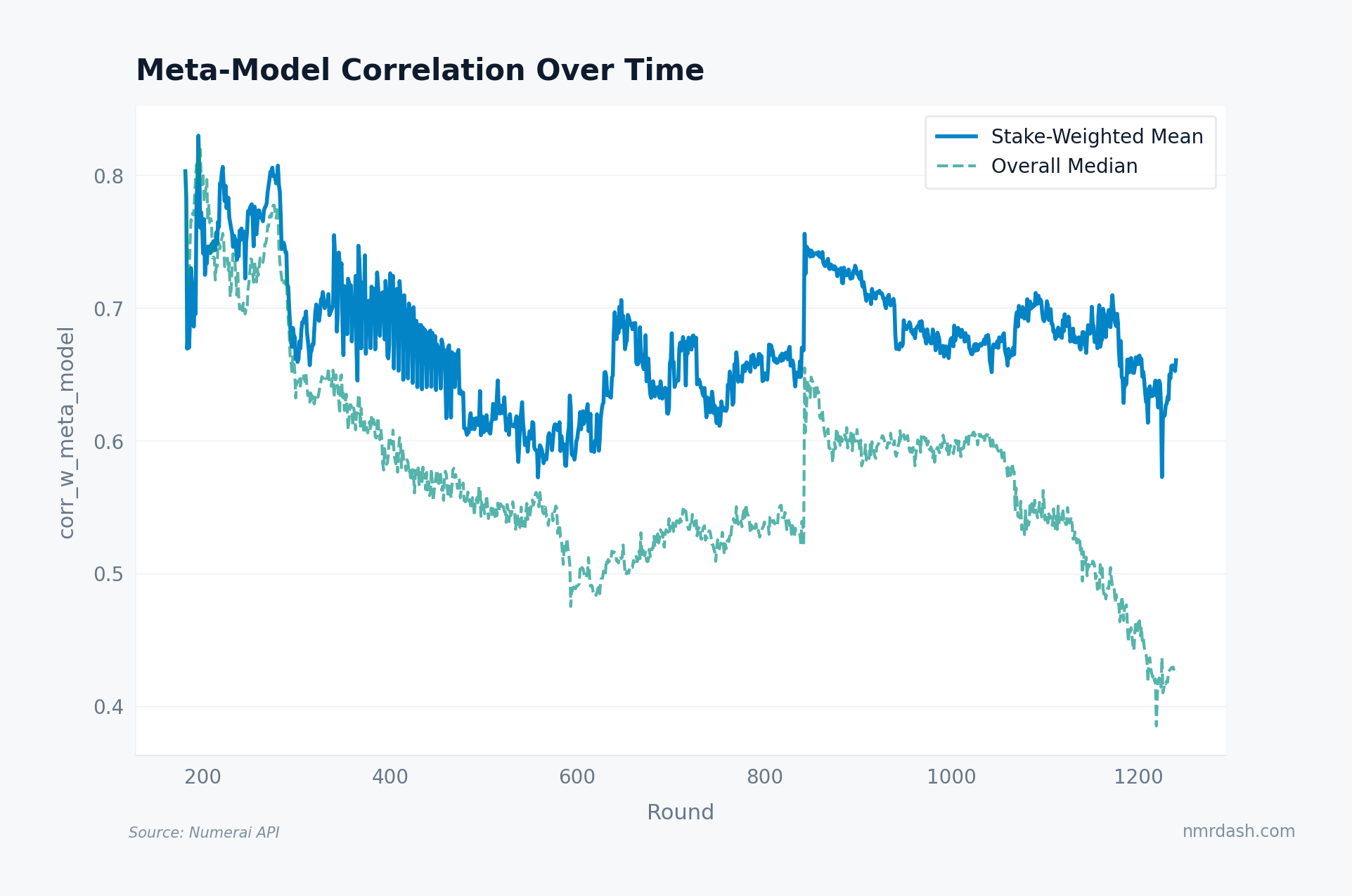

The trends page shows many metrics over time. Here, the stake-weighted mean corr_w_meta_model per round shows whether the problem is stable or compounding.

The stake-weighted mean has drifted from about 0.32 around round 400 to 0.46 in recent rounds — a 44% increase. The unweighted median rose more slowly, from 0.25 to 0.33, reflecting that new small stakers are more diverse. The gap between the two lines is widening: the capital-weighted signal is converging faster than the crowd as a whole.
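The two lines compared here can be computed per round as follows. This is a minimal sketch, assuming columns named `round` and `stake` alongside `corr_w_meta_model` (the first two names, and the function name, are assumptions) and that zero-stake rows have already been filtered out.

```python
import pandas as pd

def crowding_trend(df: pd.DataFrame) -> pd.DataFrame:
    """Per-round stake-weighted mean and unweighted median of corr_w_meta_model."""
    d = df.assign(weighted=df["corr_w_meta_model"] * df["stake"])
    g = d.groupby("round")
    return pd.DataFrame({
        # sum(corr * stake) / sum(stake): large stakers dominate this line
        "stake_weighted_mean": g["weighted"].sum() / g["stake"].sum(),
        # every model counts once, regardless of stake
        "unweighted_median": g["corr_w_meta_model"].median(),
    })
```

The gap between the two columns is the quantity of interest: it widens when capital concentrates in models that resemble the meta-model faster than the typical model does.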

This is the structural trend that makes the diversification paradox worse over time. As large stakers persist (the leaderboard top-10 barely turns over), they collectively drag the meta-model toward their shared strategy space.

The Crowding Tax: MMC After a Stake Increase

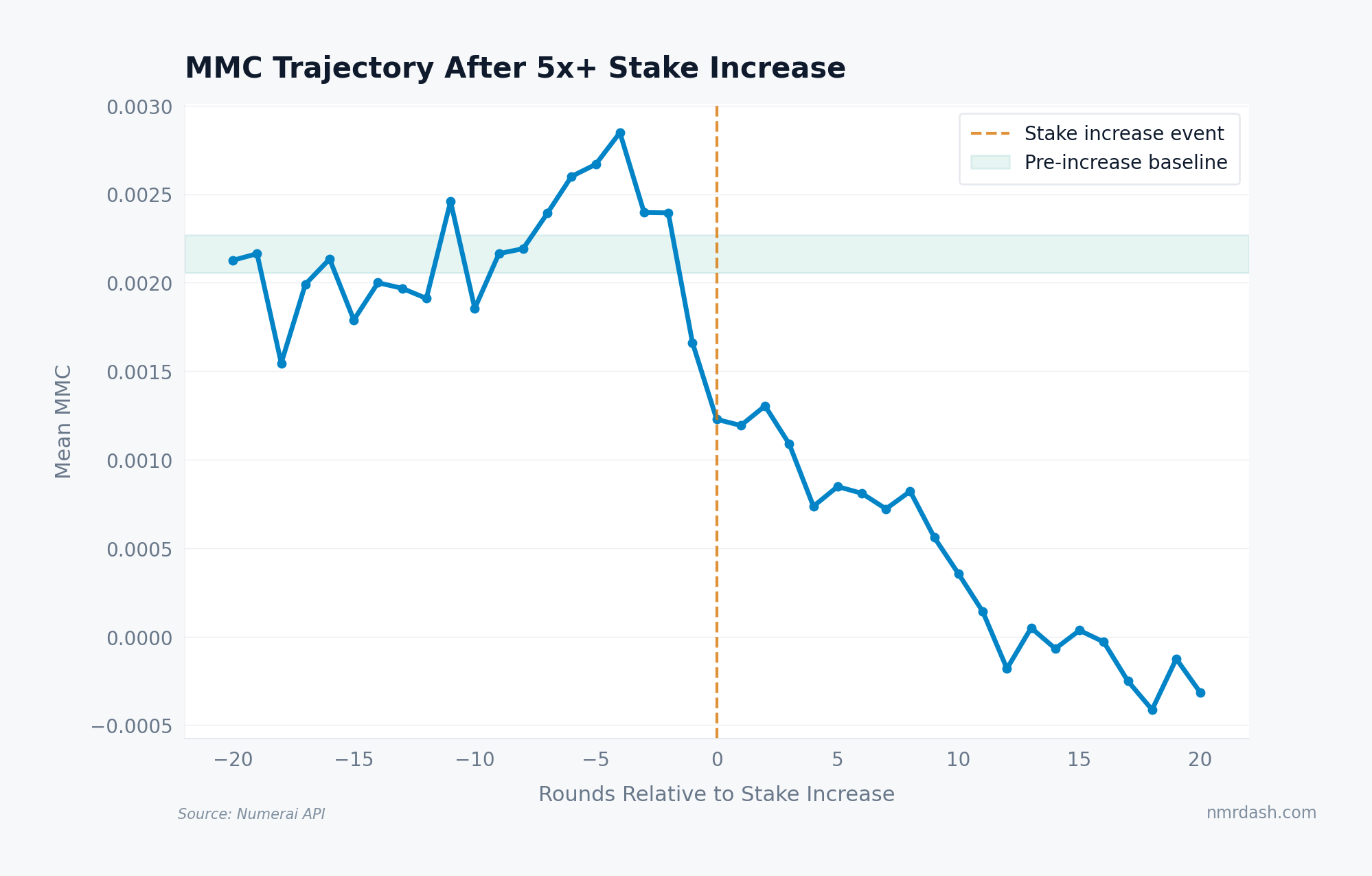

The cleanest test isolates models that dramatically increased their stake — those that crossed from small to large — and tracks what happened to their MMC.

For models that increased stake by 5x or more between adjacent rounds, MMC averaged about 0.010 over the 20 rounds before the increase. Over the 20 rounds after, it fell to roughly 0.006, a 35–40% drop. The decline begins immediately and stabilizes after about 10–12 rounds at the new, lower level.

CORJ60 stayed stable across the same window, which argues against simple model degradation, but the chart still shows an association rather than a randomized causal effect. The plausible mechanism is that more stake means more influence on the meta-model, which means higher correlation with it, which can mean lower MMC. The crowding tax is measurable, but its size should be read as an observed post-stake-increase pattern rather than a pure causal estimate.
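A before/after comparison of this kind can be sketched as a simple event study. The version below is an illustration under assumptions, not the exact procedure behind the chart: the column names `model`, `round`, `stake`, and `mmc` and the function name are hypothetical, and consecutive rows for a model are treated as adjacent rounds.

```python
import numpy as np
import pandas as pd

def stake_jump_event_study(df, ratio=5.0, window=20):
    """Mean MMC in the `window` rounds before and after each >= `ratio`x stake jump."""
    rows = []
    for model, g in df.sort_values("round").groupby("model"):
        g = g.reset_index(drop=True)
        prev = g["stake"].shift(1)
        # A jump is a round whose stake is at least `ratio` times the previous round's
        is_jump = (prev > 0) & (g["stake"] >= ratio * prev)
        for i in np.flatnonzero(is_jump.to_numpy()):
            before = g["mmc"].iloc[max(0, i - window):i]
            after = g["mmc"].iloc[i:i + window]  # starts at the first higher-stake round
            if len(before) == window and len(after) == window:  # full windows only
                rows.append({
                    "model": model,
                    "round": g["round"].iloc[i],
                    "mmc_before": before.mean(),
                    "mmc_after": after.mean(),
                })
    return pd.DataFrame(rows, columns=["model", "round", "mmc_before", "mmc_after"])
```

The relative drop is then `1 - mmc_after.mean() / mmc_before.mean()` across events. Requiring full windows on both sides discards jumps near the start or end of a model's history, which trades sample size for comparability.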

Takeaways

- Large stakers pay a crowding tax. The top stake decile sits at a median meta-model correlation of roughly 0.45 vs 0.28 for the bottom, and the MMC penalty is concentrated in meta-model-correlation deciles 7–10.

- The tax is getting worse over time. Stake-weighted mean meta-model correlation has risen from 0.32 to 0.46 over 600+ rounds as the same large models persist atop the leaderboard.

- A 5x stake increase is followed by lower MMC. The observed decline is roughly 35–40% over the next 20 rounds, with stable CORJ60 making simple model degradation less likely.

- Diversification is the only sustainable defense. Small stakers are naturally insulated; large stakers must stay uncorrelated with dominant strategies. The diversification paradox explains why that is harder than it sounds.